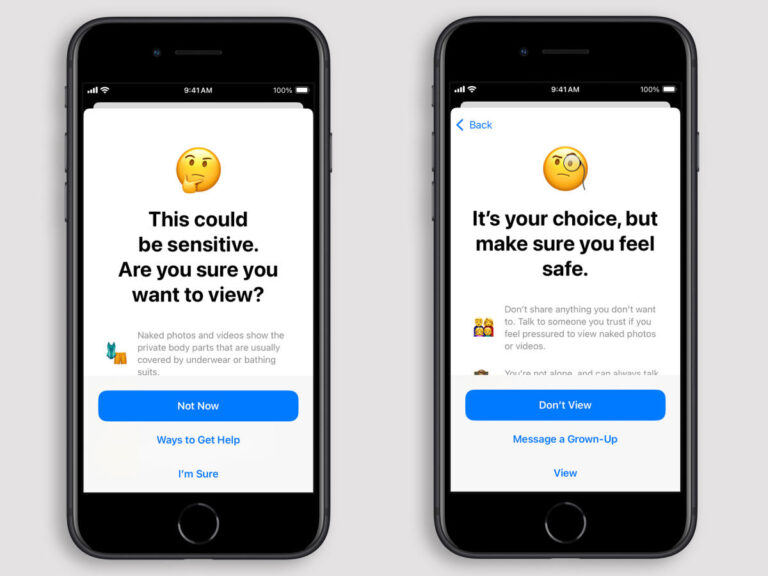

In iOS 18.2, Apple is adding a new feature that resurrects some of the intent behind its halted CSAM scanning plans, this time without breaking end-to-end encryption or providing government backdoors. Rolling out first in Australia, the company’s expansion of its Communication Safety feature uses on-device machine learning to detect and blur nude content, adding warnings and requiring confirmation before users can proceed.

When the device’s onboard machine learning detects nude content, the feature automatically blurs the photo or video, displays a warning that the content may be sensitive, and offers ways to get help. It also displays a message reassuring the child that it’s okay not to view the content or to leave the chat, with an option to message a parent or guardian. A child under 13 can’t continue without entering the device’s Screen Time passcode.

The feature analyzes photos and videos on iPhone and iPad in various apps, and extends to other devices such as Mac and Apple Watch. It requires iOS 18, iPadOS 18, macOS Sequoia, or visionOS 2. It can be managed through Settings > Screen Time > Communication Safety, and the setting has been enabled by default since iOS 17. Apple plans to expand the feature globally after the Australia trial, addressing privacy and security concerns that arose from previous controversial attempts to police online sexual abuse.